Science issues – whether connected with climate, childhood vaccines or new techniques in biotechnology – are part of the fabric of civic life, raising a range of social, ethical and policy issues for the citizenry. As members of the scientific community gather at the annual meeting of the American Association for the Advancement of Science (AAAS) this week, here is a roundup of key takeaways from our studies of U.S. public opinion about science issues and their effect on society. If you’re on Twitter, follow @pewscience for more science findings.

How we did this

The data for this post was drawn from several surveys. The most recent was a survey of 3,627 U.S. adults conducted Oct. 1 to Oct. 13, 2019. This post also draws on data from surveys conducted in January 2019, December 2018, April-May 2018 and March 2016. All surveys were conducted using the American Trends Panel (ATP), an online survey panel that is recruited through national, random sampling of residential addresses. This way nearly all U.S. adults have a chance of being selected. Each survey is weighted to be representative of the U.S. adult population by gender, race, ethnicity, education and other categories. Read more about the ATP’s methodology.

Following are the questions and responses for surveys used in this post, as well as each survey’s methodology:

- October 2019 survey: Questions | Methodology

- January 2019 survey: Questions | Methodology

- December 2018 survey: Questions | Methodology

- April-May 2018 survey: Questions | Methodology

- March 2016 survey: Questions | Methodology
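For readers curious about what "weighted to be representative" means in practice, the idea can be sketched in a few lines of Python. This is a minimal illustration of single-variable post-stratification, not the ATP's actual procedure, which adjusts on several characteristics at once and is described in the methodology links above. The function names, the ten toy respondents and the population shares are all hypothetical.

```python
from collections import Counter


def poststratify(sample_groups, population_shares):
    """Return one weight per respondent so weighted group shares match the targets."""
    n = len(sample_groups)
    counts = Counter(sample_groups)
    # Weight = target population share / observed sample share for that group.
    return [population_shares[g] / (counts[g] / n) for g in sample_groups]


def weighted_share(answers, weights, value):
    """Weighted proportion of respondents whose answer equals `value`."""
    total = sum(weights)
    hit = sum(w for a, w in zip(answers, weights) if a == value)
    return hit / total


# Hypothetical toy data (NOT Pew data): 6 college graduates, 4 non-graduates.
education = ["college"] * 6 + ["no_college"] * 4
answers = (["positive"] * 5 + ["negative"] * 1      # college respondents
           + ["positive"] * 2 + ["negative"] * 2)   # non-college respondents

# Assumed population shares, chosen only for illustration.
targets = {"college": 0.35, "no_college": 0.65}

weights = poststratify(education, targets)
unweighted = answers.count("positive") / len(answers)
weighted = weighted_share(answers, weights, "positive")

print(f"unweighted: {unweighted:.1%}, weighted: {weighted:.1%}")
```

In this toy sample, college graduates are overrepresented, so each of their answers counts for less and each non-graduate's answer counts for more. The weighted estimate shifts toward the views of the underrepresented group.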

Some public divides over science issues are aligned with partisanship, while many others are not.

Science issues can be a key battleground for facts and information in society. Climate science has been part of an ongoing discourse around scientific evidence, how to attribute average temperature increases in the Earth’s climate system, and the kinds of policy actions needed. While public divides over climate and energy issues are often aligned with political party affiliation, public attitudes on other science-related issues are not.

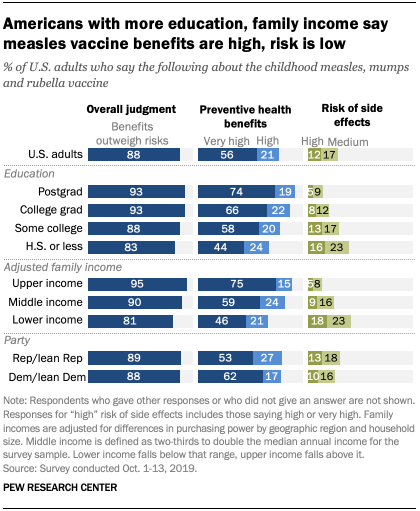

For example, there are differences in public beliefs around the risks and benefits of childhood vaccines. Such differences arise amid civic debates about the spread of false information about vaccines. While such beliefs have important implications for public health, they are not particularly political in nature.

In fact, Republicans and independents who lean to the GOP are just as likely as Democrats and independents who lean to the Democratic Party to say that, overall, the benefits of the measles, mumps and rubella vaccine outweigh the risks (89% and 88% respectively).

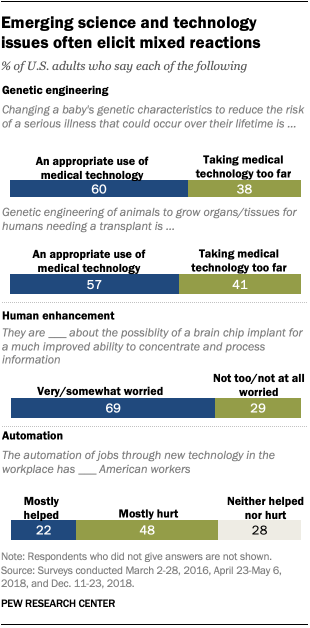

Americans have differing views about some emerging scientific and technological developments.

Scientific and technological developments are a key source of innovation and, therefore, change in society. Pew Research Center studies have explored public reactions to emergent developments including genetic engineering techniques, automation and more. One field at the forefront of public reaction is the use of gene editing of babies or genetic engineering of animals. Americans have mixed views over whether the use of gene editing to reduce a baby’s risk of serious disease that could occur over their lifetime is appropriate (60%) or is taking medical technology too far (38%), according to a 2018 survey. Similarly, about six-in-ten Americans (57%) said that genetic engineering of animals to grow organs or tissues for humans needing a transplant would be appropriate, while four-in-ten (41%) said it would be taking technology too far.

When we asked Americans about a future where a brain chip implant would give otherwise healthy individuals much improved cognitive abilities, a 69% majority said they were very or somewhat worried about the possibility. By contrast, about half as many (34%) were enthusiastic. Further, as people think about the effects of automation technologies in the workplace, more say automation has brought more harm than help to American workers.

One theme running through our findings on emerging science and technology is that public hesitancy often is tied to concern about the loss of human control, especially if such developments would be at odds with personal, religious and ethical values. In looking across seven developments related to automation and the potential use of biomedical interventions to “enhance” human abilities, Center studies found that proposals that would increase people’s control over these technologies were met with greater acceptance.

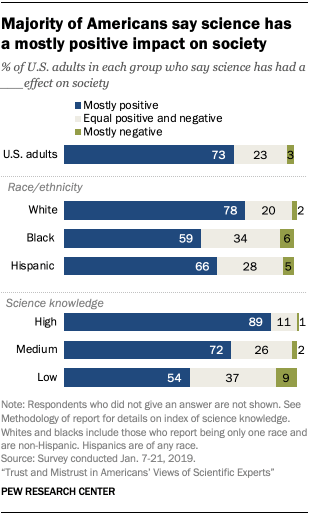

Most in the U.S. see net benefits from science for society, and they expect more ahead.

About three-quarters of Americans (73%) say science has, on balance, had a mostly positive effect on society. And 82% expect future scientific developments to yield benefits for society in years to come.

The overall portrait is one of strong public support for the benefits of science to society, though the degree to which Americans embrace this idea differs sizably by race and ethnicity as well as by levels of science knowledge.

Such findings are in line with those of the General Social Survey on the effects of scientific research. In 2018, about three-quarters of Americans (74%) said the benefits of scientific research outweigh any harmful results. Support for scientific research by this measure has been roughly stable since the 1980s.

The share of Americans with confidence in scientists to act in the public interest has increased since 2016.

Public confidence in scientists to act in the public interest tilts positive and has increased over the past few years. As of 2019, 35% of Americans report a great deal of confidence in scientists to act in the public interest, up from 21% in 2016.

About half of the public (51%) reports a “fair amount” of confidence in scientists, and just 13% have not too much or no confidence in this group to act in the public interest.

Public trust in scientists by this measure stands in contrast to that for other groups and institutions. One of the hallmarks of the current times has been low trust in government and other institutions. One-in-ten or fewer say they have a great deal of confidence in elected officials (4%) or the news media (9%) to act in the public interest.

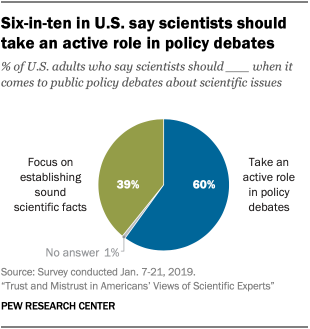

Americans differ over the role and value of scientific experts in policy matters.

While confidence in scientists overall tilts positive, people’s perspectives about the role and value of scientific experts on policy issues tend to vary. Six-in-ten U.S. adults believe that scientists should take an active role in policy debates about scientific issues, while about four-in-ten (39%) say, instead, that scientists should focus on establishing sound scientific facts and stay out of such debates.

Democrats are more inclined than Republicans to think scientists should have an active role in science policy matters. Indeed, most Democrats and Democratic-leaning independents (73%) hold this position, compared with 43% of Republicans and GOP leaners.

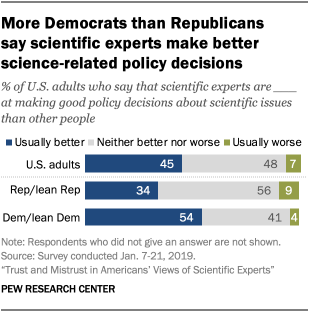

More than four-in-ten U.S. adults (45%) say that scientific experts usually make better policy decisions than other people, while a similar share (48%) says such decisions are neither better nor worse than other people’s and 7% say scientific experts’ decisions are usually worse than other people’s.

Here, too, Democrats tend to hold scientific experts in higher esteem than do Republicans: 54% of Democrats say scientists’ policy decisions are usually better than those of other people, while two-thirds of Republicans (66%) say that scientists’ decisions are either no different from or worse than other people’s.

Factual knowledge alone does not explain public confidence in the scientific method to produce sound conclusions.

Overall, a 63% majority of Americans say the scientific method generally produces sound conclusions, while 35% think it can be used to produce “any result a researcher wants.” People’s level of knowledge can influence beliefs about these matters, but it does so through the lens of partisanship, a tendency known as motivated reasoning.

Beliefs about this matter illustrate that science knowledge levels sometimes correlate with public attitudes. But partisanship has a stronger role.

Democrats are more likely than Republicans, on average, to express confidence in the scientific method to produce accurate conclusions. Most Democrats with high levels of science knowledge (86%, based on an 11-item index of factual knowledge questions) say the scientific method generally produces accurate conclusions. By comparison, 52% of Democrats with low science knowledge say this. But science knowledge has little bearing on Republicans’ beliefs about the scientific method.

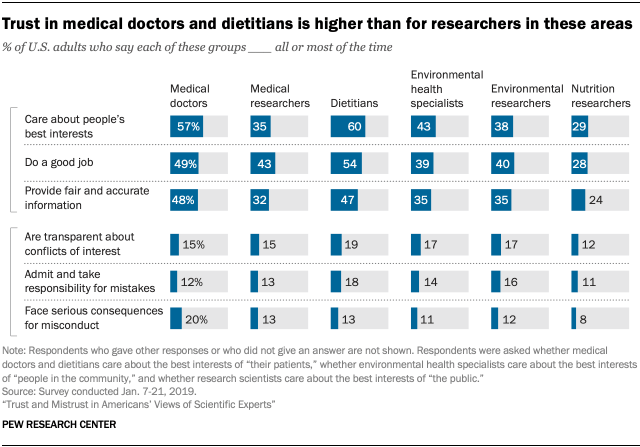

Trust in practitioners like medical doctors and dietitians is stronger than that for researchers in these fields, but skepticism about scientific integrity is widespread.

Scientists work in a wide array of fields and specialties. A 2019 Pew Research Center survey found public trust in medical doctors and dietitians to be higher than that for researchers working in these areas. For example, 48% of U.S. adults say that medical doctors give fair and accurate information all or most of the time. By comparison, 32% of U.S. adults say the same about medical research scientists. And six-in-ten Americans say dietitians care about their patients’ best interests all or most of the time, while about half as many (29%) say the same about nutrition research scientists.

One factor in public trust of scientists is familiarity with their work. For example, people who were more familiar with what medical science researchers do were more trusting of these researchers to express care or concern for the public interest, to do their job with competence and to provide fair and accurate information. Familiarity with the work of scientists was related to trust for all six specialties we studied.

But when it comes to questions of scientists’ transparency and accountability, most Americans are skeptical. About two-in-ten or fewer U.S. adults say that scientists are transparent about potential conflicts of interest with industry groups all or most of the time. Similar shares (roughly between one-in-ten and two-in-ten) say that scientists admit their mistakes and take responsibility for them all or most of the time.

This data shows clearly that when it comes to questions of transparency and accountability, most in the general public are attuned to the potential for self-serving interests to skew science findings and recommendations. These findings echo calls for increased transparency and accountability across many sectors and industries today.

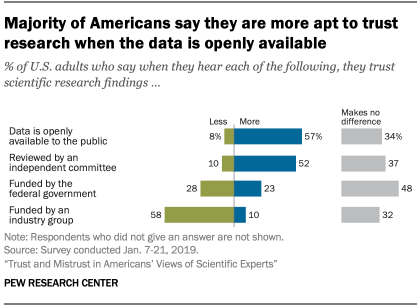

What boosts public trust in scientific research findings? Most say it’s making data openly available.

A 57% majority of Americans say they trust scientific research findings more when the data is openly available to the public. And about half of the U.S. public (52%) say they are more likely to trust research that has been independently reviewed.

The question of who funds the research is also consequential for how people think about scientific research. A 58% majority say they have lower trust when research is funded by an industry group. By comparison, about half of Americans (48%) say government funding for research has no particular effect on how much they trust the findings; 28% say this decreases their trust and 23% say it increases their trust.