The COVID-19 vaccination rate offers a rare opportunity for survey researchers to benchmark their data against a high-profile outcome other than an election – in this case, the share of adults who’ve received at least one dose of a coronavirus vaccine as documented by the Centers for Disease Control and Prevention (CDC). This kind of benchmarking helps to shed light on whether polls continue to provide reasonably accurate information about the U.S. public on this subject.

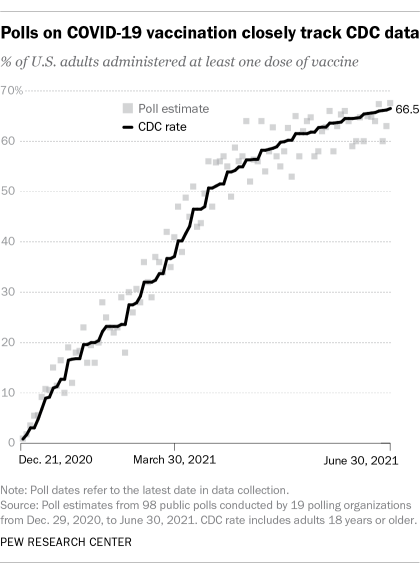

A Pew Research Center analysis finds that public polling on COVID-19 vaccination has tracked the CDC rate fairly closely. Polling estimates of the adult vaccination rate have been within about 2.8 percentage points, on average, of the rate calculated by the CDC. About one-in-five polls (22%) differed from the CDC estimate by less than 1 percentage point.

This analysis includes 98 public polls conducted by 19 different polling organizations from Dec. 29, 2020, to June 30, 2021. Researchers gauged the accuracy of each poll by computing the difference between the poll’s published estimate and the vaccination rate from the CDC on the day the poll concluded.

CDC figures were collected from the agency's publicly available API. For a complete list of polls that were included in this analysis, click here.

When polls differed from the CDC rate, they often came in higher rather than lower – but not always. One notable difference between how polling fared for the 2020 presidential election and the COVID-19 vaccination rate is that polling errors regarding the vaccine have been less systematic (i.e., less consistently in the same direction). One way to measure systematic error, also known as bias, is by letting polls that underestimated the CDC benchmark cancel out polls that overestimated the benchmark. (Researchers call this computing the “signed” average error across polls.)

In this analysis, once over- and underestimates of the CDC benchmark were allowed to cancel each other out, polls differed from the CDC rate by just +0.3 percentage points on average, with the net result being that the polls almost exactly matched the vaccination rate. For comparison, according to an analysis of national polls conducted in the final two weeks of the 2020 presidential election by the American Association for Public Opinion Research, polls “understated Trump’s share in the certified vote by 3.3 percentage points and overstated Biden’s share in the certified vote by 1.0 percentage point.”
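The two summary measures used in this analysis, the average absolute difference and the "signed" average, can be illustrated with a short Python sketch. The poll and benchmark values below are hypothetical and chosen only to show the arithmetic, and the function names are illustrative rather than taken from the researchers' own code.

```python
from statistics import mean

# Hypothetical (poll estimate, CDC benchmark) pairs, in percent.
# These are made-up numbers for illustration, not data from the analysis.
EXAMPLE_POLLS = [(52.0, 50.0), (47.0, 50.0), (61.0, 60.0)]


def signed_errors(polls):
    """Poll estimate minus benchmark; positive means the poll came in high."""
    return [poll - cdc for poll, cdc in polls]


def average_absolute_error(polls):
    """Typical size of the miss, ignoring direction."""
    return mean(abs(err) for err in signed_errors(polls))


def average_signed_error(polls):
    """Net bias: overestimates and underestimates cancel each other out."""
    return mean(signed_errors(polls))


print(average_absolute_error(EXAMPLE_POLLS))  # 2.0
print(average_signed_error(EXAMPLE_POLLS))    # 0.0
```

In this toy example the polls miss by 2 points on average, yet the signed average is zero because the one underestimate offsets the two overestimates. A signed average near zero therefore indicates little systematic bias, even when individual polls are off.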

To be sure, the vaccination rate among Americans was increasing while each poll was being conducted, making it difficult to pin down the exact difference in the vaccination rates reported in polling data and the official CDC rates. The median duration of the polls in this analysis was six days. If the polls’ accuracy is judged based on the CDC rate for the mid-date of the data collection, rather than the final date, the average absolute difference would be 3.1 percentage points instead of 2.8. Further complicating the comparisons is the fact that the CDC rates themselves are not necessarily without error because of issues such as delays in jurisdictions reporting vaccinations.
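The effect of the benchmark date can be sketched in the same way. The example below assumes a hypothetical daily CDC series and a single hypothetical poll; the dates, rates and helper names are all invented for illustration.

```python
from datetime import date, timedelta

# Hypothetical CDC rates (percent) rising 0.5 points per day over one week.
# These values are invented for illustration only.
HYPOTHETICAL_CDC = {
    date(2021, 3, 1) + timedelta(days=i): 20.0 + 0.5 * i for i in range(7)
}


def mid_date(start, end):
    """Midpoint of the field period, rounded down to a whole day."""
    return start + (end - start) // 2


def absolute_error(poll_estimate, benchmark_date, cdc_by_date):
    """Absolute gap between a poll and the CDC rate on the chosen date."""
    return abs(poll_estimate - cdc_by_date[benchmark_date])


start, end = date(2021, 3, 1), date(2021, 3, 7)
poll_estimate = 24.0  # hypothetical poll result, in percent

print(absolute_error(poll_estimate, end, HYPOTHETICAL_CDC))                   # 1.0
print(absolute_error(poll_estimate, mid_date(start, end), HYPOTHETICAL_CDC))  # 2.5
```

Because the benchmark rises during the field period, a poll that runs high looks further off when compared with the earlier mid-date rate than with the final-date rate.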

As other research has suggested, poll results may differ from the CDC vaccination rate (and from each other) because of differences in how pollsters asked about vaccine status. The most common type of question in this analysis asks something like “Have you gotten vaccinated for the coronavirus?” with answer options for “Yes” or “No.” Other questions asked respondents if they knew anyone who had been vaccinated and included an answer option for the respondent to say they had received the vaccine themselves. Some pollsters asked if respondents planned to get vaccinated, with an option to indicate that they had already received the vaccine. The average absolute difference for the 76 questions using a yes/no format was 2.8 percentage points, compared with 3.0 points for the 22 questions that used a different format.

In some ways, the fact that the polling industry has done a better job estimating vaccinations than voting is not surprising. Election polls face challenges that don’t exist for non-election polls measuring public opinion on issues like abortion or immigration. Election polls focus on a future behavior (will you vote? for whom?) and need to screen for respondents who are likely voters.

Another kind of benchmarking involves pollsters asking questions that also appear on gold-standard, high-response-rate government surveys and comparing their results with the government result. While this is an important and worthwhile exercise, those analyses aren’t comparing a survey to an objective outcome, but rather one survey to another.

Coronavirus vaccination rates present pollsters with a rare opportunity to check their results against a high-profile outcome that is fully available to the U.S. adult population (rather than only citizens and registered voters) and that has a known true value. Unlike the polls’ less-than-stellar performance in the 2020 election, the results from this analysis suggest that the polls have done well in tracking growth in the share of adults receiving the vaccines.